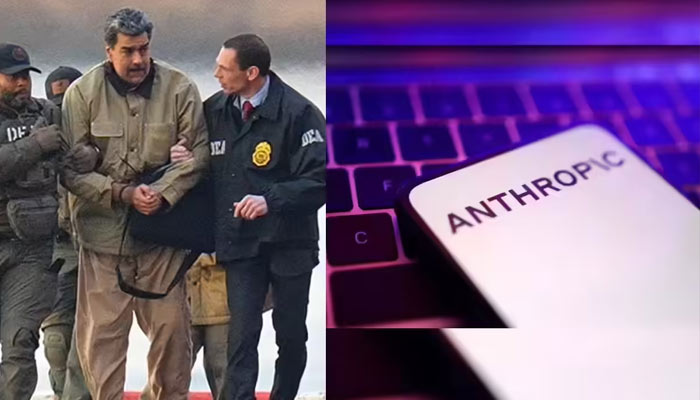

The U.S. military used an artificial intelligence system developed by Anthropic during an operation that led to the capture of former Venezuelan President Nicolás Maduro, according to a report by The Wall Street Journal.

The AI model, known as Claude, was reportedly deployed in a classified Pentagon setting — marking the first time an AI developer’s model was used in such an environment. Its involvement is seen as a significant milestone for the fast-growing AI sector, where military adoption is often viewed as a validation of technological credibility and commercial value.

The report said Claude was accessed through a partnership between Anthropic and Palantir Technologies, whose platforms are widely used by the U.S. Department of Defense. The exact function the AI system performed during the Venezuela operation has not been publicly detailed.

Maduro and his wife were captured in Caracas after multiple sites were bombed, according to the report.

Anthropic’s publicly stated usage policies prohibit Claude from facilitating violence, developing weapons or conducting surveillance. In a statement, a company spokesperson said any use of Claude — whether in the private sector or by government agencies — must comply with its guidelines, and that the firm works closely with partners to ensure adherence to its policies.

The deployment has reportedly created friction between Anthropic and U.S. defense officials. Concerns raised by the company over how its AI tools are being used have led some officials to consider cancelling contracts worth up to $200 million, the Journal said.

Anthropic Chief Executive Dario Amodei has previously called for stronger regulation and guardrails around artificial intelligence, warning against its use in autonomous lethal systems and domestic surveillance — issues that are understood to be central to ongoing discussions with the Pentagon.

Earlier this year, U.S. Defense Secretary Pete Hegseth said the Pentagon would not work with AI systems that “won’t allow you to fight wars,” referring to broader debates over operational flexibility and restrictions in military settings.

Anthropic signed its $200 million contract with the Defense Department last summer. While several AI companies are developing tools for U.S. military use, most systems operate on unclassified networks. Anthropic’s model is reportedly the only one currently accessible in classified environments through third-party platforms, though government users remain subject to the company’s stated usage policies.
